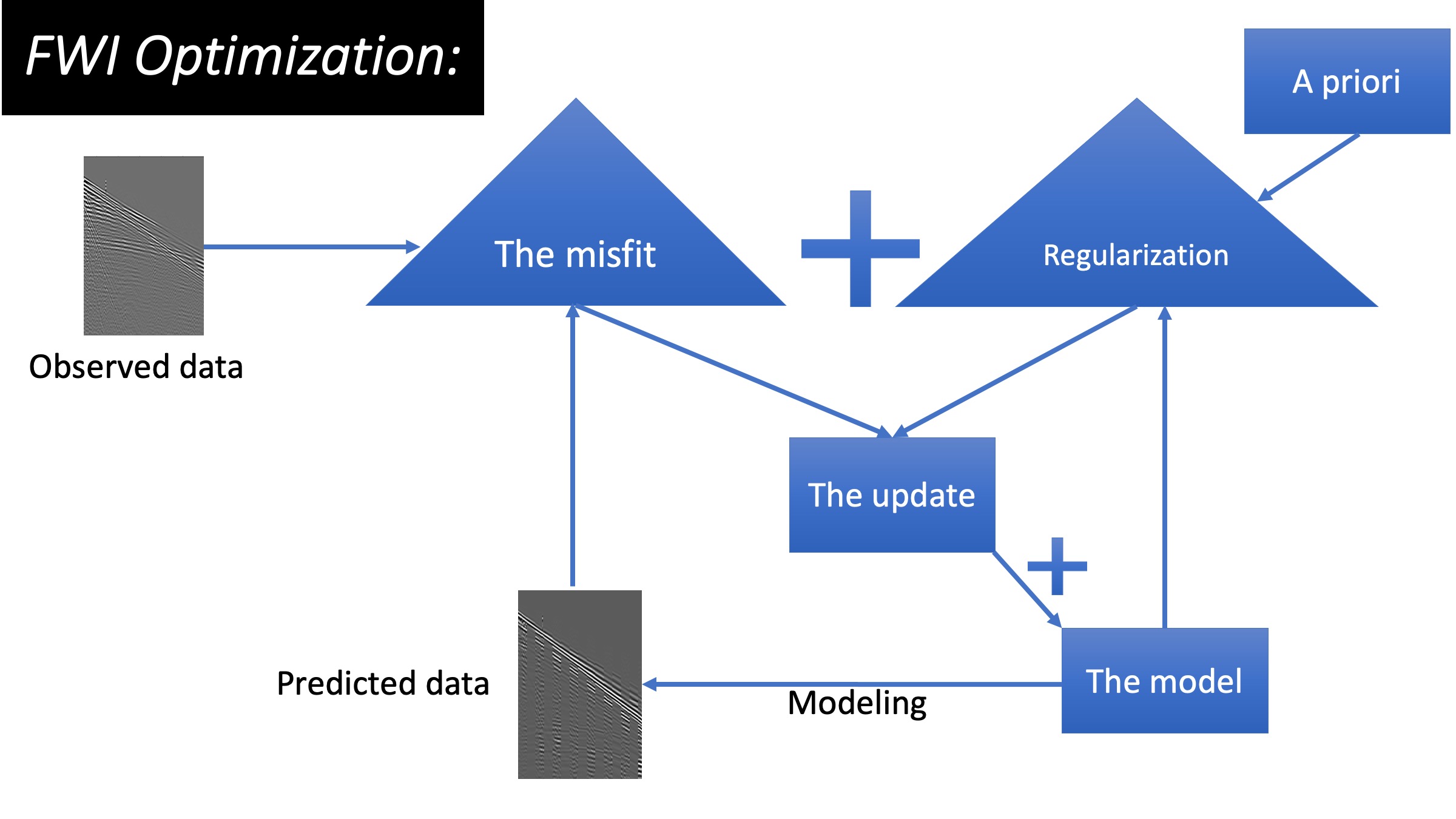

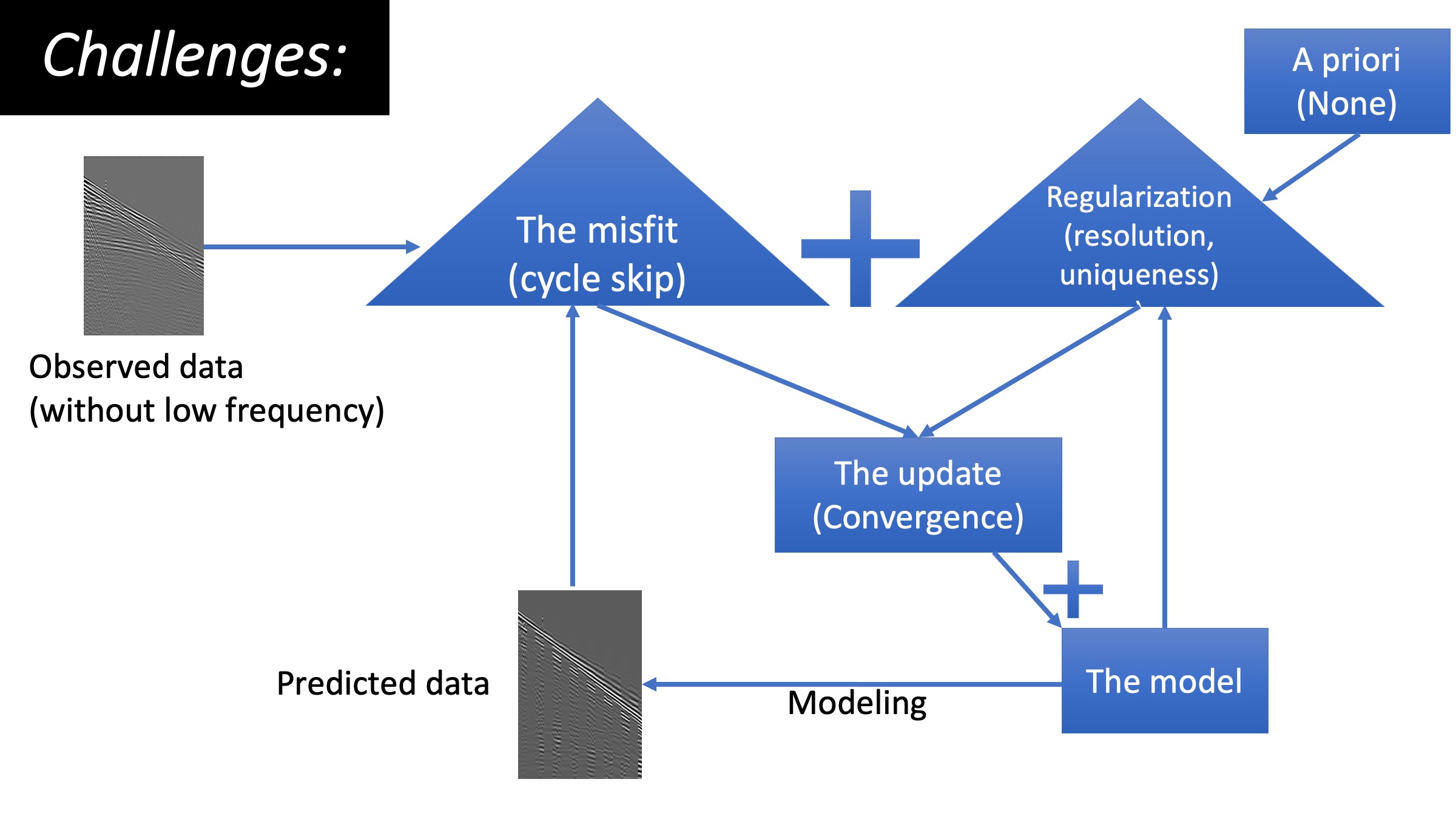

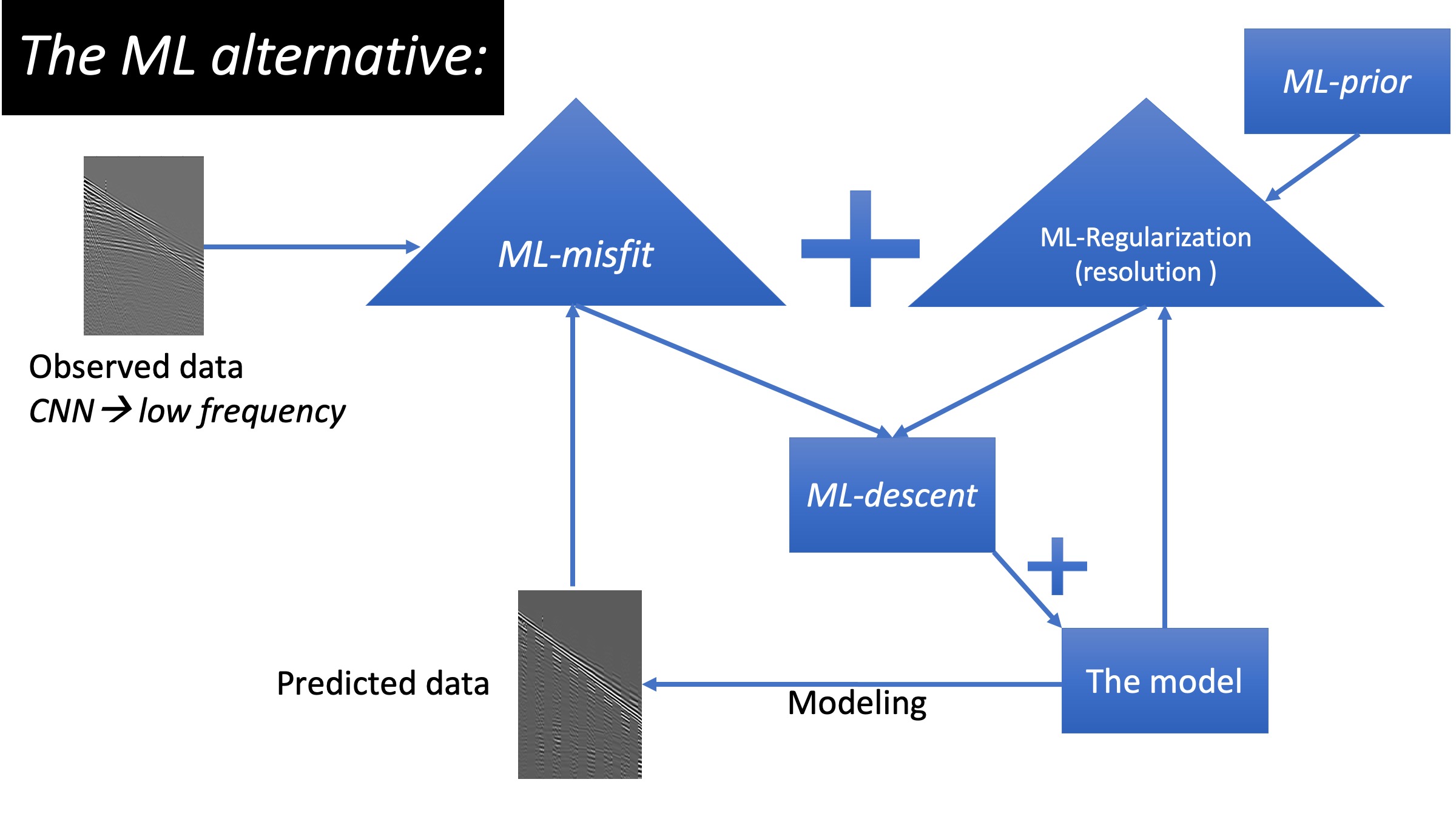

The shift to ML solutions for full waveform inversion (FWI) in three diagrams

- The objective function: we train a neural network (NN) to replace the classic misfit; we refer to it as the ML-misfit.

- The regularization: we train an NN to map information from a well into an a priori constraint for FWI; we refer to it as the ML-prior.

- The update: we train an NN to act as an optimal conditioner for the gradient; we refer to it as the ML-descent.
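
To make the division of labor concrete, the three components can be placed in a generic regularized FWI iteration. The notation below is an assumption introduced here for illustration, not taken from the cited papers: $m$ is the model, $d_{\mathrm{obs}}$ and $d_{\mathrm{syn}}(m)$ are the observed and simulated data, $\Phi$ is the misfit, $R$ is the regularization term with weight $\lambda$, $\alpha_k$ is a step length, and $P_k$ is the operator that conditions the gradient.

$$
J(m) = \Phi\big(d_{\mathrm{obs}},\, d_{\mathrm{syn}}(m)\big) + \lambda\, R(m),
\qquad
m_{k+1} = m_k - \alpha_k\, P_k\, \nabla J(m_k)
$$

In this reading, the ML-misfit would take the place of $\Phi$, the ML-prior would supply $R$, and the ML-descent would learn the action of $P_k$ on the gradient.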

The ML-misfit

The ML-prior

The ML-descent

A recently developed ML-descent methodology uses an auto-encoder and a recurrent neural network (RNN) to exploit the history of the gradients and provide a better update (Sun and Alkhalifah, 2020b), much as the well-known L-BFGS method does. Unlike L-BFGS, however, our network learns from data how to form the update. The inputs to the RNN are the gradients at each iteration and the output is the update, both expressed in the latent space. A network trained on patches from the Marmousi model performed much better than L-BFGS on the Overthrust model, converging to a high-resolution model in fewer iterations.
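
The data flow described above (gradient, encode, recurrent cell, decode, model update) can be sketched in a few lines of plain Python. This is a minimal illustration under strong assumptions, not the published implementation: `encode` and `decode` are identity stand-ins for a trained auto-encoder, `recurrent_cell` is a hand-set linear recurrence standing in for the trained RNN, and `toy_gradient` is the gradient of a two-parameter quadratic rather than an FWI gradient. All function names and parameter values are hypothetical.

```python
def encode(gradient):
    """Hypothetical encoder: identity stand-in for a trained auto-encoder."""
    return list(gradient)


def decode(latent_update):
    """Hypothetical decoder: identity stand-in for a trained auto-encoder."""
    return list(latent_update)


def recurrent_cell(state, latent_gradient, decay=0.5, step=0.1):
    """Hand-set linear recurrence standing in for the trained RNN cell.

    The state accumulates past latent gradients, so the returned update
    depends on the gradient history, not only on the current gradient.
    """
    new_state = [decay * s + g for s, g in zip(state, latent_gradient)]
    latent_update = [-step * s for s in new_state]
    return new_state, latent_update


def toy_gradient(model, curvature=(1.0, 4.0)):
    """Gradient of the toy misfit 0.5 * sum(c_i * m_i**2); not an FWI gradient."""
    return [c * m for c, m in zip(curvature, model)]


def run_update_loop(model, n_iter=200):
    """Iterate: gradient -> encode -> recurrent cell -> decode -> model update."""
    model = list(model)
    state = [0.0] * len(model)
    for _ in range(n_iter):
        z = encode(toy_gradient(model))
        state, dz = recurrent_cell(state, z)
        model = [m + d for m, d in zip(model, decode(dz))]
    return model


if __name__ == "__main__":
    print(run_update_loop([1.0, -2.0]))
```

With these hand-set weights the recurrence reduces to classical momentum (heavy-ball) descent. In the method described above, the cell's weights would instead be learned from training data, which is what distinguishes it from a fixed rule such as L-BFGS.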

References

Li Y., Alkhalifah T., and Zhang Z., (2020), "High-Resolution Regularized Elastic Full Waveform Inversion Assisted by Deep Learning", Conference Proceedings, 82nd EAGE Annual Conference & Exhibition Workshop. DOI: 10.3997/2214-4609.202010281.

Sun B., and Alkhalifah T., (2020a), "ML-Misfit: Learning a Robust Misfit Function for Full-Waveform Inversion Using Machine Learning", King Abdullah University of Science and Technology, arXiv:2002.03163.

Sun B., and Alkhalifah T., (2020b), "ML-descent: an optimization algorithm for FWI using machine learning", GEOPHYSICS: accepted. DOI:10.1190/geo2019-0641.1.
